Scoping, rules of engagement, and the real difference between pentesting, vulnerability scanning, and red teaming.

| Note: All screenshots in this article are illustrative examples built from fictional data. They are included to show what good scope, rules of engagement, and service-selection artifacts can look like. |

Ask five stakeholders what a pentest is supposed to do and you will often get five different answers. One person expects a compliance checkbox. Another expects a clean bill of health. A third expects a Hollywood-style simulated breach. That expectation gap is exactly why scoping and rules of engagement matter so much.

A pentest is not a promise that your environment is secure. It is a controlled, authorized attempt to identify and validate exploitable paths inside an agreed scope and under agreed constraints. NIST describes vulnerability scanning as a technique used to identify hosts, host attributes, and associated vulnerabilities [1]. NIST also describes penetration testing as security testing in which evaluators mimic real-world attacks to identify ways around the security features of an application, system, or network [2].

| Key idea: A pentest does not answer, “Are we secure?” It answers, “What can a skilled tester validate inside this scope, under this ruleset, during this timebox?” |

What a pentest actually covers

A well-run pentest usually covers four things:

• Named targets and boundaries. The engagement should identify which applications, APIs, hosts, IP ranges, cloud assets, wireless networks, or internal segments are fair game.

• Agreed test conditions. The client and tester should know whether the test is black-box, gray-box, or white-box; whether credentials are provided; and whether production systems are being touched.

• Controlled exploitation and validation. Pentesters do not just list theoretical weaknesses. They try to validate whether flaws are reachable, exploitable, and chainable, without crossing safety boundaries.

• Actionable reporting. The output should include evidence, impact, attack paths, remediation guidance, and often a retest plan for fixed issues.

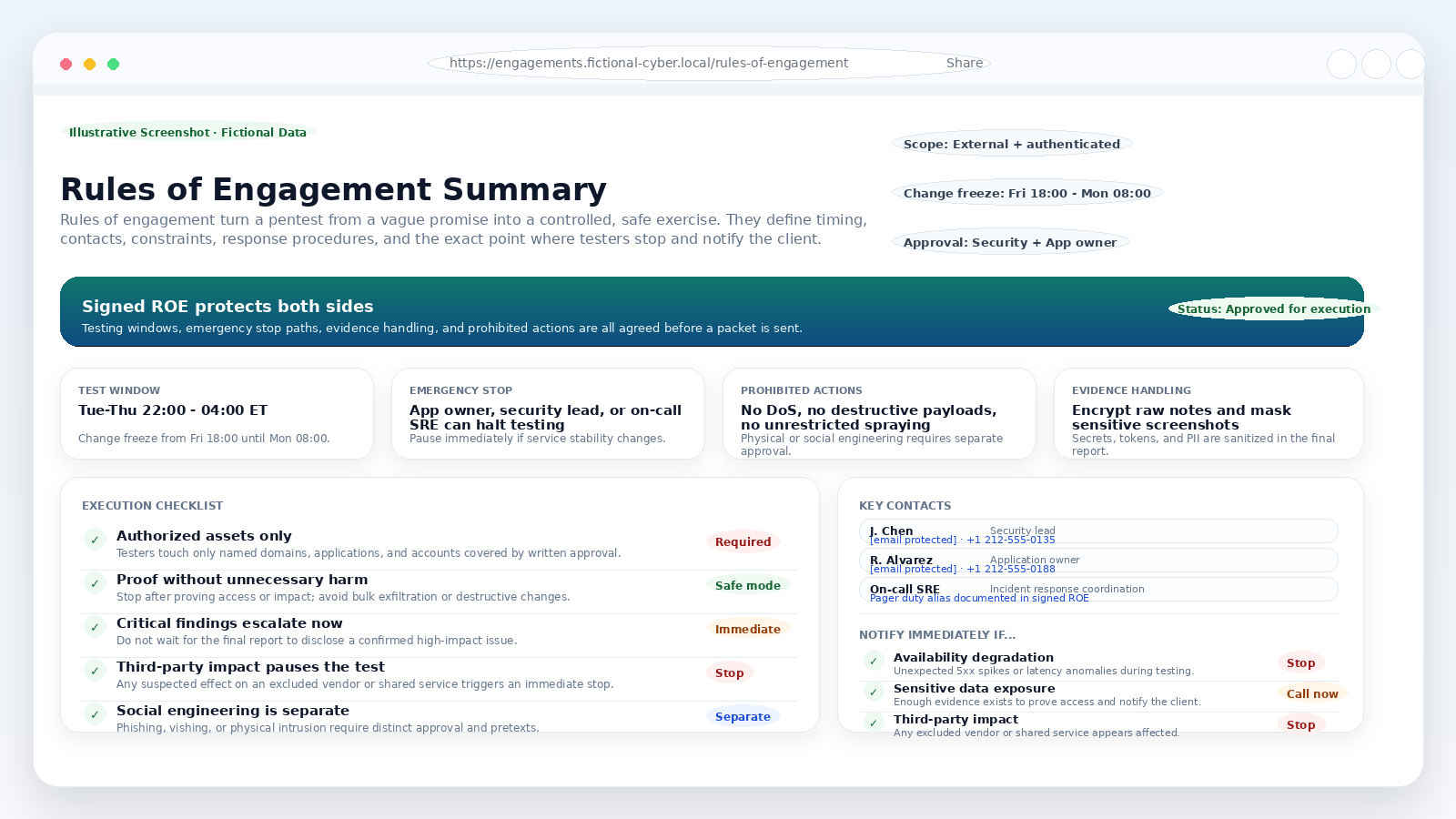

Figure 1. Illustrative screenshot: a fictional scope register showing in-scope targets, exclusions, and objectives.

Why scoping matters more than most teams realize

A lot of pentest disappointment is really scoping failure. If the statement of work says “external pentest,” but nobody clarifies whether the public API, identity provider, admin panel, or third-party payment flow is included, the final report may be technically correct and still feel useless.

Good scoping should make six things obvious before testing begins:

• Which exact assets are in scope, including domains, IP ranges, mobile backends, cloud accounts, or internal segments.

• Which assets are out of scope, especially third-party services that require separate authorization.

• What access model applies: black-box, gray-box, white-box, or some role-based combination of those.

• Which attack paths are allowed, such as authenticated application testing, tenant-boundary checks, wireless testing, or safe privilege-escalation attempts.

• Which actions are prohibited, including destructive payloads, denial-of-service, broad password spraying, or persistent footholds in production.

• What counts as sufficient proof, so testers know when to stop after validating impact instead of pushing further than the client needs.

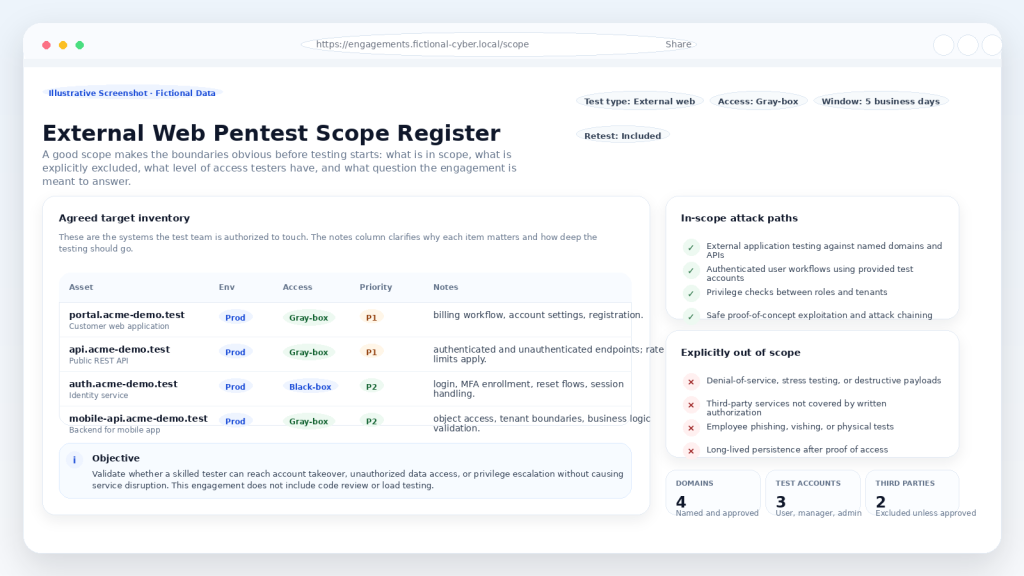

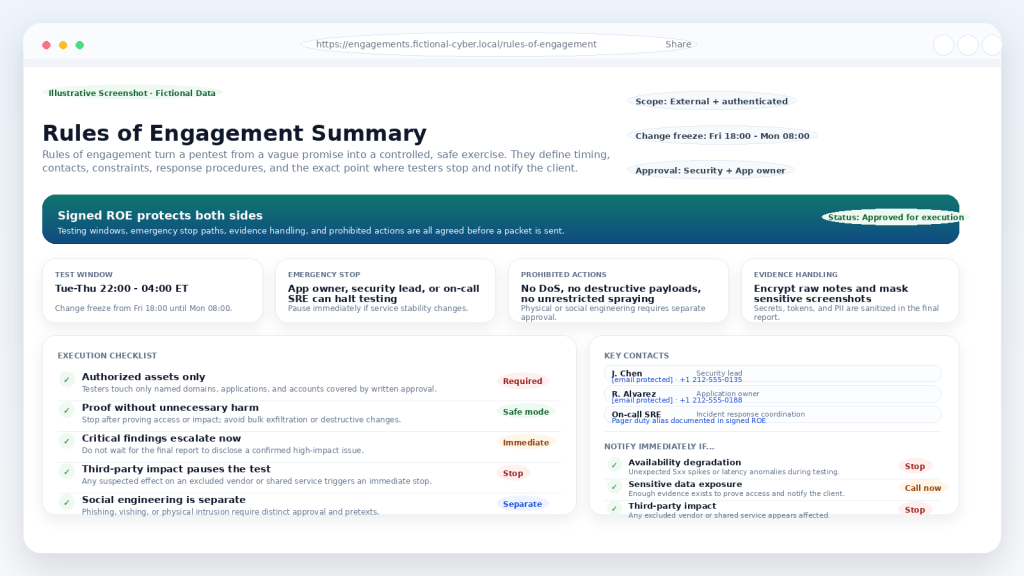

Rules of engagement: how testing stays safe and useful

Scope answers what can be tested. Rules of engagement answer how testing will happen. FedRAMP guidance says the rules of engagement and test plan should describe the target systems, scope, constraints, notifications and disclosures, testing periods, relevant personnel, incident response procedures, and evidence handling requirements [3].

In practice, a useful rules-of-engagement document should spell out:

• Testing windows, blackout periods, and any change freezes.

• Primary contacts, backup contacts, and an emergency stop path.

• Rate limits, production safeguards, and “prove it without harming it” expectations.

• Prohibited actions, including denial-of-service, destructive payloads, and anything touching excluded third parties.

• Notification thresholds for critical findings and service-impact concerns.

• How screenshots, secrets, personal data, and raw evidence will be handled, stored, and sanitized.
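Some of these rules can also be encoded so that tooling refuses to run outside them. The sketch below is hypothetical and not a standard format: `RulesOfEngagement`, its field names, and action labels such as `"dos"` are placeholders, and it assumes the caller passes datetimes in the timezone the testing window was agreed in. It covers the daily testing window (including one that crosses midnight), blackout dates, prohibited actions, an emergency stop flag, a simple request-rate ceiling, and a notification trigger for critical findings.

```python
from dataclasses import dataclass, field
from datetime import date, datetime, time


@dataclass
class RulesOfEngagement:
    """Hypothetical machine-readable summary of a few RoE constraints."""
    window_start: time                                   # daily window start (agreed timezone)
    window_end: time                                     # daily window end (agreed timezone)
    blackout_dates: set = field(default_factory=set)     # e.g. change-freeze days
    prohibited_actions: frozenset = frozenset({"dos", "destructive_payload", "password_spray"})
    notify_severities: frozenset = frozenset({"critical"})
    max_requests_per_second: int = 10
    stop_requested: bool = False                         # flipped by the emergency stop path

    def _in_window(self, t: time) -> bool:
        if self.window_start <= self.window_end:
            return self.window_start <= t < self.window_end
        # Window crosses midnight, e.g. 22:00 to 06:00.
        return t >= self.window_start or t < self.window_end

    def may_test(self, now: datetime, action: str) -> bool:
        """True only if no stop, action allowed, not a blackout date, and inside the window."""
        if self.stop_requested:
            return False
        if action in self.prohibited_actions:
            return False
        if now.date() in self.blackout_dates:
            return False
        return self._in_window(now.time())

    def must_notify_now(self, severity: str) -> bool:
        """Findings at these severities are escalated during the test, not saved for the report."""
        return severity.lower() in self.notify_severities

    def min_request_interval(self) -> float:
        """Minimum seconds between requests implied by the rate ceiling."""
        return 1.0 / self.max_requests_per_second


roe = RulesOfEngagement(
    window_start=time(22, 0),
    window_end=time(6, 0),
    blackout_dates={date(2024, 12, 24)},
)
```

A check like this supplements the written rules of engagement. It does not replace the named contacts or the human stop path.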

Figure 2. Illustrative screenshot: a fictional rules-of-engagement summary covering timing, stop conditions, contacts, and notification triggers.

What a pentest does not cover

Just as important as scope is knowing what a pentest does not do by default. A pentest is not a general-purpose security verdict. It is a focused exercise that answers a narrower question well.

• It does not prove there are no vulnerabilities left. It only shows what testers were able to validate inside the agreed scope and time window.

• It does not replace continuous vulnerability management, secure configuration, or recurring scanning.

• It does not automatically test your SOC, incident response process, or executive communications plan.

• It does not automatically include code review, phishing, vishing, physical intrusion, or long-term persistence unless those items are explicitly scoped.

• It does not replace architecture review, threat modeling, or regression testing after major changes.

| A practical framing: A good pentest is a sharp answer to a narrow question. It becomes weak only when people expect it to answer every security question at once. |

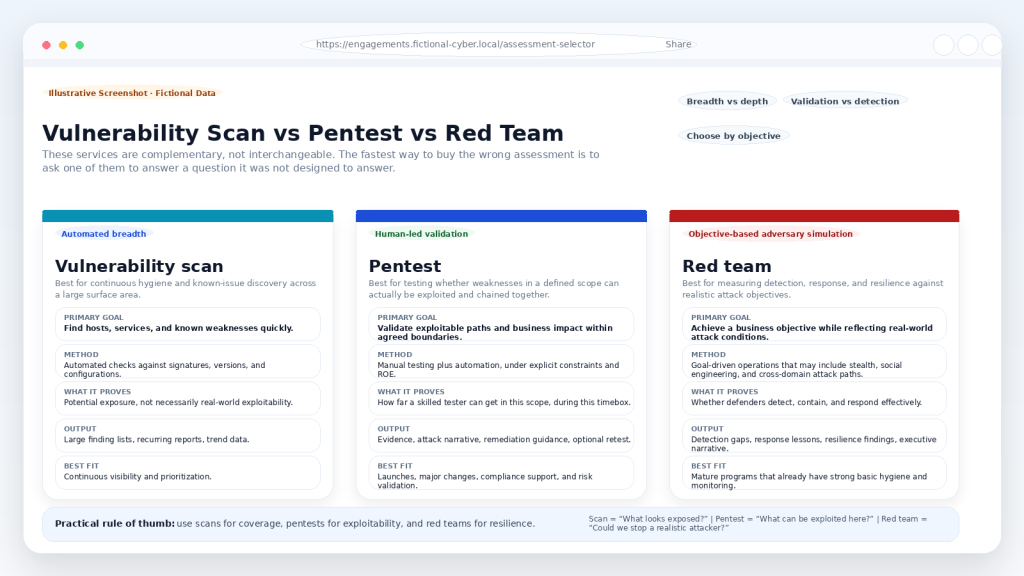

Vulnerability scan vs pentest vs red team

These services overlap, but they are not interchangeable. Vulnerability scanning is broad and automated. Pentesting is human-led and exploit-focused. Red teaming is objective-based and closer to adversary simulation. NIST guidance on red team exercises says they extend the objectives of penetration testing by examining organizational security posture and defensive capability, and may include both technology-based attacks and social engineering [4].

Figure 3. Illustrative screenshot: a simple comparison of vulnerability scans, pentests, and red team exercises.

• Vulnerability scan: broad, repeatable, and useful for continuous hygiene. It is best for identifying known weaknesses quickly across a large surface area.

• Pentest: human-led, scoped, and designed to validate exploitability and business impact inside a defined environment.

• Red team: objective-driven and closer to a realistic adversary simulation. It is best for measuring detection, response, escalation, and resilience.

For many organizations, the right ladder is simple: use scans for continuous coverage, pentests for exploitability validation, and red teams for resilience testing once the basics are already in place.

Before you buy a pentest, ask these six questions

1. Which exact hosts, domains, applications, APIs, and cloud assets are in scope?

2. What is explicitly out of scope, including every third-party dependency?

3. Is the engagement black-box, gray-box, or white-box, and which credentials will be provided?

4. Which actions are prohibited, and what are the stop conditions?

5. How will critical findings be escalated during the test, and who can halt testing?

6. Is retesting included, and what deliverables will the client receive at the end?

Conclusion

Do not ask whether a pentest covers everything. Ask whether the scope and rules of engagement match the business question you actually need answered.

If you need broad, recurring visibility, run vulnerability scanning. If you need to know whether weaknesses in a defined environment are truly exploitable, run a pentest. If you need to know whether a realistic attacker could hit a business objective and whether your defenders would catch it, plan a red team.

The clearer the scope and rules are before the first packet is sent, the more useful the result will be when the report lands on your desk.

References

[1] NIST CSRC Glossary, “Vulnerability Scanning.”

[2] NIST CSRC Glossary, “Penetration Testing.”

[3] FedRAMP Penetration Test Guidance, Rules of Engagement / Test Plan sections.

[4] NIST SP 800-53 Rev. 5, CA-8(2) Red Team Exercises.